Six million years of bipedal memory sits encoded in every human infant before it takes its first breath. The machines we’re building right now carry none of it. That gap is the most underexamined problem in AI development.

A newborn can grip your finger with enough force to briefly support its own body weight. Nobody taught it to do that. The palmar grasp reflex is a holdover from primate infants who needed to cling to their mother’s fur while she moved. The environment changed. The grip stayed. That reflex is older than the hominin line itself: it has been encoded in primate DNA for tens of millions of years.

Bipedal walking is the same story on a shorter timeline. Between six and seven million years ago, early hominins such as Sahelanthropus tchadensis appear to have begun standing upright in Africa. That transition took millions of years of failed experiments – individuals who couldn’t balance, couldn’t outrun predators, couldn’t survive. The ones who stayed upright reproduced, and each generation of selection wrote the program deeper into the genome. By the time Homo sapiens arrived roughly 300,000 years ago, standing wasn’t a skill. It was a birthright.

So when a 10-month-old pulls itself up against a coffee table and locks its knees, it is not imitating its parents. It is running a program written long before its parents were born.

The Evidence That Observation Isn’t the Driver

Congenitally blind children, who have never watched another person walk, still learn to stand and walk. Sighted children pull to stand at around 9 months and walk independently between 12 and 15 months. Blind children typically reach the same milestones in the same order, often several months later, and that delay is usually attributed to the missing incentive to move toward things they cannot see, not to a missing motor program. The visual input that most people assume is doing the teaching turns out not to be required for the core motor program.

What observation does provide is calibration. A child watches a parent step over a threshold and learns to judge height. It watches someone stumble on gravel and adjusts its gait. The social environment doesn’t install walking – it tunes a system that was already running.

Mirror neurons are one proposed mechanism. These are cells, first identified in the premotor cortex of macaques, that fire both when an animal performs an action and when it watches another perform it. On this account, when a baby watches a parent walk, its motor system runs a low-level simulation of the same movement. That simulation doesn’t build the walking program from scratch. It sharpens one that arrived pre-loaded.

What Planned Memory Actually Means

Planned memory is the encoded behavioral architecture that a species inherits at birth – not learned, not observed, but written into neurology through millions of years of selection pressure.

Human infants arrive with several of these pre-installed: the rooting reflex (turning toward touch on the cheek, to find a nipple), the startle response (arms out, then in, triggered by sudden sound or movement), and the fear-of-falling response (which activates before an infant has ever fallen). None of these requires a teacher. All of them required millions of years of ancestors dying without them.

DNA is the storage medium. Every generation, successful behavioral programs get copied forward. Unsuccessful ones get edited out by mortality. What survives long enough to replicate is what the next generation inherits as instinct.

AI Has No Equivalent Mechanism

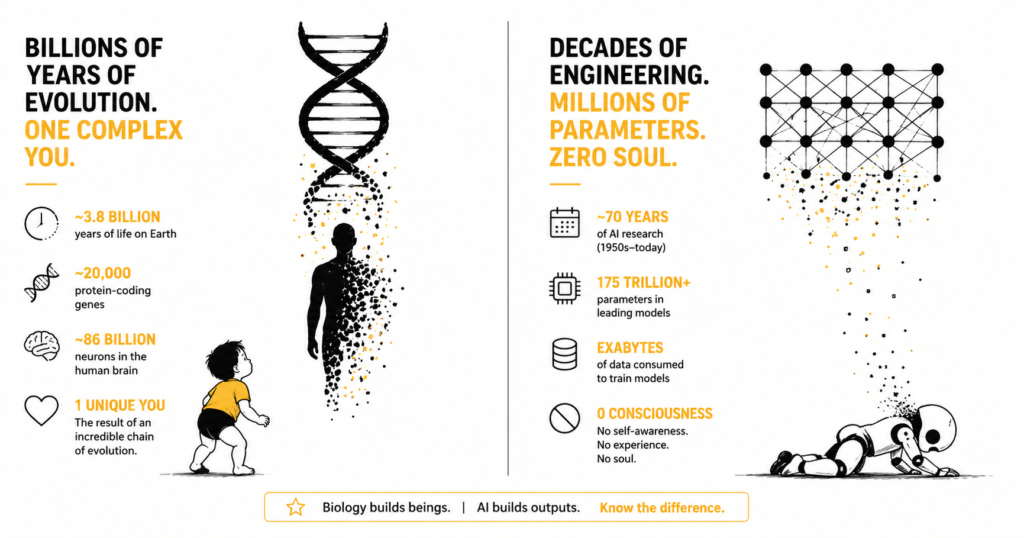

GPT-4 was trained on a vast corpus of text – books, code, scientific papers, web pages. OpenAI has not published the size of that corpus; outside estimates run into the trillions of tokens. Training reportedly took several months on thousands of specialized processors, at an estimated cost of over $100 million. The resulting model weights encode a sophisticated understanding of language, reasoning, and factual knowledge.

Then GPT-4o came out, and that process started over.

OpenAI has not published the details, but it described GPT-4o as a single new model trained end to end across text, vision, and audio, not as GPT-4 with additions. On that description, the weights from GPT-4 were not passed to GPT-4o as a foundation, and the understanding GPT-4 built up across trillions of tokens was not inherited by its successor. The same research team carried the same institutional knowledge forward – but the model itself carried nothing.

This is the structural problem. In biological terms, it is as if every human generation were born without a nervous system and had to redevelop one from raw sensory input before it could function. The species would never accumulate anything. Each generation would spend its entire lifespan getting back to where the previous one ended.

AI is doing exactly that, one model release at a time.

Transfer Learning Is a Partial Fix, Not a Solution

The research community recognized this problem and developed transfer learning – the practice of taking a model pre-trained on broad general knowledge and fine-tuning it for specific tasks. A medical AI company doesn’t train a language model from scratch. It starts with an existing model (GPT-4, LLaMA, Mistral) and fine-tunes it on clinical notes, drug literature, and diagnostic records. The fine-tuned model inherits the general capabilities and specializes from there.
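The warm-start idea behind fine-tuning can be sketched with a toy one-parameter model. This is an illustration under stated assumptions, not how any production model is tuned: the functions `train` and `loss`, the two tiny datasets, and the learning rate are all hypothetical, chosen only so the effect is visible in a few lines.

```python
def train(w, data, lr=0.1, steps=5):
    """Plain gradient descent on mean squared error for the model y = w * x."""
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def loss(w, data):
    """Mean squared error of the model y = w * x on the given data."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


# Hypothetical "general" task (y = 2.0 * x) and nearby "specialized" task (y = 2.2 * x).
general = [(x, 2.0 * x) for x in (0.5, 1.0, 1.5)]
special = [(x, 2.2 * x) for x in (0.5, 1.0, 1.5)]

pretrained = train(0.0, general, steps=200)       # the expensive base training run
fine_tuned = train(pretrained, special, steps=5)  # small budget, inherited starting point
from_scratch = train(0.0, special, steps=5)       # same small budget, no inheritance

print(loss(fine_tuned, special), loss(from_scratch, special))
```

With the same five-step budget, the run that starts from the pretrained weight ends much closer to the specialized target than the run that starts from zero. That head start is the whole of what fine-tuning inherits.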

That’s closer to planned memory, but it’s inheritance of architecture and weights at a single point in time – not cumulative inheritance across generations of learning. The fine-tuned medical model doesn’t pass its specialized knowledge back to the base model. When the next base model version ships, the fine-tuning starts over on the new foundation.

There’s also a subtler form of inheritance happening through training data. Models like Claude and GPT-4 were trained on web-scale text that very likely included outputs from earlier AI systems. In that narrow sense, knowledge from previous model generations shaped the training environment of successors – the same way early hominin tool use changed what behaviors their descendants needed to survive. But this is diffuse and uncontrolled. Nobody is systematically curating what gets passed forward or filtering out the errors.

The Inheritance Problem Cuts Both Ways

If AI systems eventually develop true generational inheritance – weights or distilled reasoning strategies passed forward from one model to the next – the risk isn’t only that good things accumulate. Bad things accumulate too.

Human planned memory includes the amygdala’s hair-trigger threat detection, calibrated for a world where a rustle in tall grass meant a predator. That same system now produces panic attacks on subway trains. The fear response was adaptive for 99% of human evolutionary history and is maladaptive for many people living in 2025. Evolution had no mechanism to update it, because the update would have required experiencing the new environment for thousands of generations before the selection pressure shifted the genome.

AI inheritance would face the same trap. The reasoning biases in GPT-4 – overconfidence on rare events, poor calibration on long-tail probabilities, susceptibility to certain prompt structures – could become the baseline instincts of GPT-10 if those weights propagate forward unchecked. The errors would feel like nature rather than artifact, which makes them harder to identify and correct.

Designing AI inheritance without a mechanism for deliberate unlearning is how you build a system that gets more confidently wrong across generations.
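A toy model makes the compounding visible. Everything here is an assumption made for illustration: the function `propagate`, the idea that bias can be captured as a single number, and the specific rates are hypothetical, not measurements of any real model. Each generation inherits its predecessor’s bias, adds a small error of its own, and optionally unlearns a fraction of what it inherited.

```python
def propagate(generations, new_error=0.02, unlearn_rate=0.0, start=0.05):
    """Return the bias carried at each generation under a toy linear model."""
    bias = start
    history = [bias]
    for _ in range(generations):
        # Keep whatever inherited bias was not unlearned, then add fresh error.
        bias = bias * (1.0 - unlearn_rate) + new_error
        history.append(bias)
    return history


unchecked = propagate(6)                   # no unlearning: bias grows every generation
filtered = propagate(6, unlearn_rate=0.5)  # half of the inherited bias removed each time

print(unchecked[-1], filtered[-1])
```

Without unlearning, the bias rises by a fixed step every generation and never stops. With it, the bias settles toward `new_error / unlearn_rate`, a ceiling set by how aggressively each generation audits what it was handed.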

Where This Lands

A human baby stands on its own at around 12 months because its genome carries roughly 6 million years of instructions for doing so. Those instructions were written by selection pressure across hundreds of thousands of generations, refined by every ancestor who survived long enough to reproduce.

Current AI starts that process over with every new model. The $100 million training run, the trillions of tokens, the months of compute – all of it produces a model that will not pass its learned understanding to its successor. As far as the labs have disclosed, the next version starts from a fresh initialization and relearns everything.

The fix is not simply training bigger models or running longer. It requires an inheritance mechanism: a way to distill what a model has learned into a form that seeds the next generation’s starting point, combined with a filtering process that identifies which inherited patterns to keep and which to discard. No major AI lab has publicly shipped this yet. Researchers are working on pieces of it under names like “model merging,” “continual learning,” and “weight interpolation,” but none of these has produced stable generational inheritance at scale.
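The two halves of that mechanism, seeding and filtering, can be sketched in a few lines. This is a minimal sketch under heavy assumptions, not a description of any lab’s method: weights are plain lists of floats, parent and successor share identical parameter shapes (which real successor models often do not), and the names `interpolate`, `seed_next_generation`, `syntax`, and `known_bias` are hypothetical.

```python
def interpolate(parent, child, alpha):
    """Linear weight interpolation: alpha = 1.0 keeps the parent, 0.0 keeps the child."""
    return {
        name: [alpha * p + (1.0 - alpha) * c
               for p, c in zip(parent[name], child[name])]
        for name in parent
    }


def seed_next_generation(parent, fresh_init, keep, alpha=0.8):
    """Inherit only the parameter groups named in `keep`; leave the rest at fresh_init."""
    merged = interpolate(parent, fresh_init, alpha)
    return {
        name: merged[name] if name in keep else list(fresh_init[name])
        for name in fresh_init
    }


# Hypothetical parameter groups: one worth inheriting, one flagged for discard.
parent = {"syntax": [1.0, 2.0], "known_bias": [5.0]}
fresh_init = {"syntax": [0.0, 0.0], "known_bias": [0.0]}

seed = seed_next_generation(parent, fresh_init, keep={"syntax"}, alpha=0.5)
print(seed)
```

The hard part is not the arithmetic. It is deciding what belongs in `keep`, which in a real network would mean knowing which weights encode a useful skill and which encode an inherited error.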

The baby knows how to stand before it stands. Until AI systems can say the same – arriving with accumulated understanding rather than starting from scratch – every new model release is a species that forgot everything its predecessor learned.